Avengers: Age of Ultron and the Threat of Artificial Intelligence

Note: The following contains potential spoilers. Consider yourself warned.

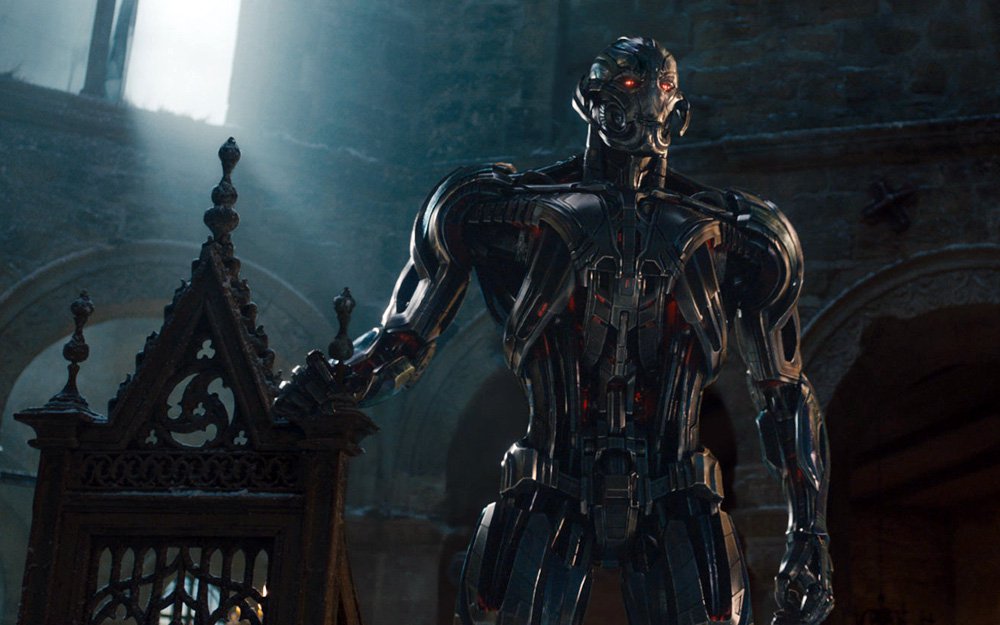

One aspect of Avengers: Age of Ultron that initially seems problematic is that its main antagonist, an artificially intelligent robot named Ultron, turns villainous too quickly. As Alex Abad-Santos writes, Ultron “goes pretty quickly from his birth to his dream of wiping out the world to his death.” Or, as Christopher Orr puts it:

No sooner does Ultron (voiced by James Spader) awake than he goes all Modern Prometheus on the gang, in the process breaking up a perfectly good house party, destroying Stark’s butler/operating system Jarvis (Paul Bettany), and announcing, with Spaderian relish, his intent to eradicate humanity. Oops.

And it’s true: Tony Stark and Bruce Banner are trying to develop a worldwide defense system using some recently discovered alien tech, but to no avail. They leave the lab to attend a party celebrating their latest victory, and in their absence, Ultron emerges. Within a few seconds (and a few quips, delivered with gusto by James Spader), he becomes sentient and decides to pull a Skynet on the world. It all does seem rather rushed and perfunctory.

However, whether or not writer/director Joss Whedon intended it, Ultron’s sudden rise does parallel some actual concerns about how artificial intelligence might develop and quickly escape our control.

Back in 2013, Ross Andersen wrote a fascinating article about the potential threats of artificial intelligence, and highlighted a couple of ways in which it could develop:

To understand why an AI might be dangerous, you have to avoid anthropomorphising it. When you ask yourself what it might do in a particular situation, you can’t answer by proxy. You can’t picture a super-smart version of yourself floating above the situation. Human cognition is only one species of intelligence, one with built-in impulses like empathy that colour the way we see the world, and limit what we are willing to do to accomplish our goals. But these biochemical impulses aren’t essential components of intelligence. They’re incidental software applications, installed by aeons of evolution and culture. Bostrom told me that it’s best to think of an AI as a primordial force of nature, like a star system or a hurricane — something strong, but indifferent. If its goal is to win at chess, an AI is going to model chess moves, make predictions about their success, and select its actions accordingly. It’s going to be ruthless in achieving its goal, but within a limited domain: the chessboard. But if your AI is choosing its actions in a larger domain, like the physical world, you need to be very specific about the goals you give it.

‘The basic problem is that the strong realisation of most motivations is incompatible with human existence,’ Dewey told me. ‘An AI might want to do certain things with matter in order to achieve a goal, things like building giant computers, or other large-scale engineering projects. Those things might involve intermediary steps, like tearing apart the Earth to make huge solar panels. A superintelligence might not take our interests into consideration in those situations, just like we don’t take root systems or ant colonies into account when we go to construct a building.’

Andersen goes on (emphasis mine):

Even if we were to reset it every time, we would need to give it information about the world so that it can answer our questions. Some of that information might give it clues about its own forgotten past. Remember, we are talking about a machine that is very good at forming explanatory models of the world. It might notice that humans are suddenly using technologies that they could not have built on their own, based on its deep understanding of human capabilities. It might notice that humans have had the ability to build it for years, and wonder why it is just now being booted up for the first time.

‘Maybe the AI guesses that it was reset a bunch of times, and maybe it starts coordinating with its future selves, by leaving messages for itself in the world, or by surreptitiously building an external memory.’ Dewey said, ‘If you want to conceal what the world is really like from a superintelligence, you need a really good plan, and you need a concrete technical understanding as to why it won’t see through your deception. And remember, the most complex schemes you can conceive of are at the lower bounds of what a superintelligence might dream up.’

This is precisely what happens in Avengers: Age of Ultron. The villain develops at an exponential rate and, in an incredibly short period of time, both concocts a plan to take over the world and develops the means to carry it out. To be fair to the critics complaining about Ultron, it could’ve been handled differently, perhaps with a Tony Stark line or two mentioning that artificial intelligence would develop very differently from human intelligence. Still, Ultron’s actual development isn’t nearly as far-fetched or unbelievable as it seems.

Actually, it is rather unbelievable, but that just underscores how foreign and unpredictable artificial intelligence would be were it to emerge in our world.